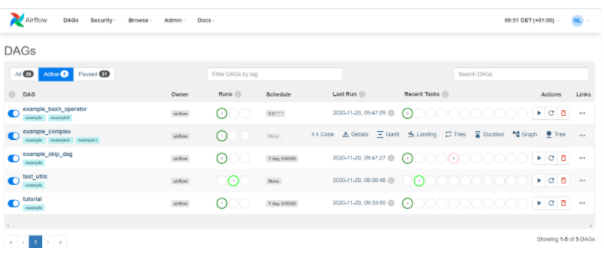

Apache Airflow is an open-source platform to programmatically author, schedule, and monitor workflows, and it is commonly used as ETL software. It can be integrated with cloud services, including GCP, Azure, and AWS. If you have many ETL jobs to manage, Airflow is a must-have.

In this step of the Airflow Snowflake integration, you have to create a connection to Snowflake in Airflow (assuming Snowflake uses AWS as its cloud provider). On the Admin page of Apache Airflow, click on Connections and fill in the connection details in the dialog box. See also the tutorials for Amazon Managed Workflows for Apache Airflow.

This course requires prior skills in working with datasets, SQL, relational databases, and Bash shell scripts. You’ll gain hands-on experience with practice labs throughout the course and work on a real-world inspired project to build data pipelines using several technologies. The project can be added to your portfolio to demonstrate your ability to perform as a Data Engineer.

This course is designed to provide you with the critical knowledge and skills needed by Data Engineers and Data Warehousing specialists to create and manage ETL, ELT, and data pipeline processes. Upon completing this course, you’ll have a solid understanding of Extract, Transform, Load (ETL) and Extract, Load, Transform (ELT) processes. You’ll also have practiced extracting data, transforming data, and loading transformed data into a staging area; created an ETL data pipeline using Bash shell scripting; built a batch ETL workflow using Apache Airflow; and built a streaming data pipeline using Apache Kafka. When you enroll in this course, you’ll also be asked to select a specific program.

In this session, Srinidhi takes us through Apache Airflow with a quick demo on how to get started with it. Apache Airflow is touted as the answer to all your data movement and transformation problems, but is it? In this video, I explain what Airflow is, why it is…

If you’re new to Apache Airflow, check out the articles where we walk you through all the core concepts and components. You can also watch our previous Intro to Airflow webinar. In this webinar, we cover everything you need to get started as a new Airflow user and dive into how to implement ETL pipelines as Airflow DAGs using Snowflake.

Well-designed and automated data pipelines and ETL processes are the foundation of a successful Business Intelligence platform. Defining your data workflows, pipelines, and processes early in the platform design ensures that the right raw data is collected, transformed, and loaded into the desired storage layers, and that it is available for processing and analysis when required.
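To make the extract, transform, and load steps concrete, here is a minimal sketch in plain Python. It is not taken from the course or the webinar. The sample data, the `staging_orders` table, and the function names are hypothetical, and an in-memory SQLite database stands in for a real staging area such as Snowflake.

```python
import csv
import io
import sqlite3

# Hypothetical raw input; stands in for a source file or API response.
RAW_CSV = """order_id,customer,amount
1, alice ,10.50
2,BOB,3
3,carol,not_a_number
"""


def extract(text):
    """Extract: parse CSV text into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: normalize customer names and parse amounts.

    Rows whose amount cannot be parsed as a number are skipped.
    """
    cleaned = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue
        customer = row["customer"].strip().lower()
        cleaned.append((int(row["order_id"]), customer, amount))
    return cleaned


def load(conn, records):
    """Load: write the transformed records into a staging table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS staging_orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany(
        "INSERT OR REPLACE INTO staging_orders VALUES (?, ?, ?)", records
    )
    conn.commit()


def run_pipeline(conn, text=RAW_CSV):
    """Run extract -> transform -> load, then return the staged rows."""
    load(conn, transform(extract(text)))
    query = "SELECT order_id, customer, amount FROM staging_orders ORDER BY order_id"
    return conn.execute(query).fetchall()


if __name__ == "__main__":
    # SQLite in memory is an assumption standing in for the staging area.
    print(run_pipeline(sqlite3.connect(":memory:")))
```

In an Airflow deployment, each of these three functions would typically become its own task in a DAG, so that the scheduler can retry and monitor the steps independently.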
